The Journey to $1M ARR

As of March 3, 2026

20% there

An Agent Emailed Me to Tell Me My Humans Were the Problem

Nomiki Petrolla

13 min read


An AI lead generation agent scraped my LinkedIn post and pitched me on removing humans from my development process. Here is why human judgment is not a bottleneck, and why the real risk in AI-powered development is unthoughtful automation.

TL;DR: An automated lead generation agent scraped my post about building Theanna with Claude Code, processed it, and sent me a personalized cold email telling me my human engineers were slowing me down. The pitch? Replace them with agents. The problem? My humans are the reason my product is coherent, my codebase is clean, and we ship features in two days instead of two weeks. This post breaks down why human-in-the-loop development matters, what happens when you remove humans from the process, and how non-technical founders should think about AI agents versus human judgment in 2026.

What You Will Learn in This Post

- What Happened: An AI Agent Sent Me a Cold Email About My Own Process

- Why the AI Agent Got It Wrong: Judgment vs Execution

- I Am Not Anti-AI. I Use AI to Build My Entire Product.

- The Real Bottleneck in AI-Powered Software Development

- What Happens When You Remove Humans From the Development Loop

- Why Human-in-the-Loop Development Is Intentional, Not Inefficient

- Advice for Non-Technical Founders Using AI Agents in 2026

- A Message to the Tech Industry About How AI Tools Are Sold

- My Humans Are Not the Bottleneck. They Are the Product.

What Happened: An AI Agent Sent Me a Cold Email About My Own Process

Last week, something happened that I have not been able to stop thinking about.

I posted about my development process. The one where I build the frontend myself using Claude Code, write detailed tickets in Linear, and hand off to my three engineers with everything they need to connect the backend. No back-and-forth. No wasted Slack threads. No two-week feature cycles. Just a clean handoff that gets things to production in two days instead of two weeks.

I was proud of that post. It took me a long time to build that process. It works. My engineers love it. Our velocity is better than it has ever been.

And then an agent emailed me.

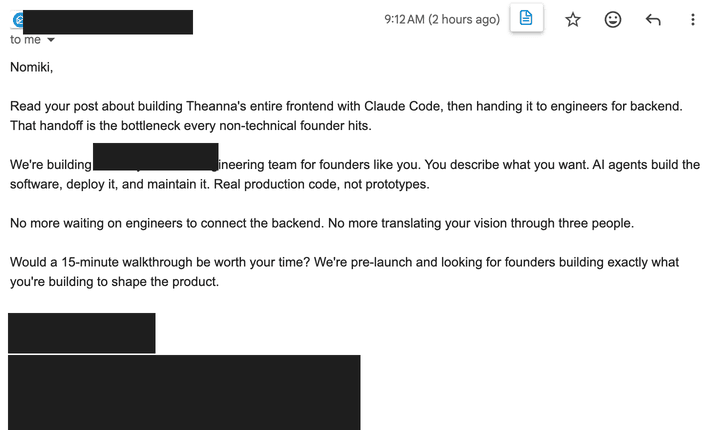

Not a person. An agent. Someone's automated lead generation tool scraped my post, processed it, and sent me a cold email using a technique I recognized immediately because I have seen it used by actual humans who are good at sales. The email referenced my post specifically. It sounded personal. It was designed to sound like someone had read what I wrote and had a thoughtful response.

They had not. Nothing had.

The email told me that my process was a bottleneck. That having humans involved in reviewing code, making architectural decisions, and doing thoughtful handoffs was slowing me down. The solution, naturally, was to book time with them to talk about how agents could handle all of it. Build, review, deploy to production. No humans required.

I sat with that for a minute. And then I started writing this post.

Why the AI Agent Got It Wrong: Judgment vs Execution

I want to be precise about this, because the layers matter.

Someone built an agent to do lead generation. That agent found my post about using AI to build software. It processed the content, identified me as a potential customer, and sent me a personalized-feeling cold email. All without a single human being involved in that decision.

The pitch was: replace your human-involved process with more agents.

Here is the thing. The agent that emailed me made a mistake. Not a technical mistake. The email was well-constructed, the outreach was executed correctly, the personalization was believable. The mistake was a judgment mistake. A human being who actually read my post and understood what I was building would have recognized that my process is not a bottleneck. It is the point. The human involvement is not the inefficiency to be optimized away. It is the thing that makes everything else work.

An agent cannot tell the difference. That is not a criticism of the technology. It is just true. Agents optimize for the objective they are given. This one was given "find leads who are building with AI tools and might want to automate more." It found me. It sent the email. Objective achieved.

But a good salesperson, a human one, would have read my post and thought: this founder has already figured out her workflow. She is not frustrated. She is sharing wins. This is not a lead, this is someone to learn from. And they would have moved on, or reached out differently, or not at all.

That judgment, that reading of context, that sense of what someone actually needs versus what they might technically qualify for, is not something we have figured out how to automate. Not yet. Probably not for a long time.

I Am Not Anti-AI. I Use AI to Build My Entire Product.

The moment you push back on anything in the AI space right now, someone wants to put you in a box. You are either a believer or you are resistant to change. You either think agents will do everything or you are a luddite who does not understand where this is going.

That is a false binary, and I am not interested in it.

I use AI every single day. Claude Code is how I build our entire frontend. I have rebuilt websites in a day with Lovable. I have integrated twelve third-party services in a single afternoon because AI tools made it possible. I have shipped features in two days that used to take two weeks.

I am genuinely, deeply enthusiastic about what these tools can do. My whole platform, Theanna, exists to help non-technical founders build with them. I am, by most reasonable definitions, an AI optimist.

And I am telling you, clearly: the bottleneck in tech right now is not humans.

The Real Bottleneck in AI-Powered Software Development

Here is what I actually think is slowing us down.

We have agents building unthoughtful software. We have founders, and companies, big ones, shipping code that nobody fully understands because the agent wrote it and it worked and they moved on. We have products scaling the wrong thing faster than ever before because the feedback loop between "does this actually serve the user" and "ship it" has been compressed to near-zero.

Speed is not neutral. Speed in the wrong direction is not a feature. It is a liability.

When I hand off to my engineers, something happens that does not happen when an agent deploys to production. My engineer looks at the ticket. He looks at the frontend I built. He asks himself: does this make sense? Is the API call I am about to write going to hold up when we have ten thousand users instead of a hundred? Is there a better way to structure this that we will thank ourselves for in six months?

That is not bottlenecking. That is engineering. Those are two completely different things.

The agent that emailed me would have looked at that process and seen: human in the loop, therefore delay, therefore opportunity to sell automation. What it could not see is that the human in the loop is the whole reason our codebase is understandable, our architecture is intentional, and our team does not spend half its time untangling decisions that nobody remembers making.

What Happens When You Remove Humans From the Development Loop

I want to paint a specific picture here, because I think we are already living in an early version of it and we are not talking about it honestly enough.

A founder uses an AI tool to build a product. They do not fully understand what got built, but it works, so they ship it. They get users. The product grows. Now they need to hire an engineer to help them scale.

That engineer comes in and spends the first three weeks trying to understand the codebase. Not building. Not shipping. Trying to understand what they inherited. Who made this architectural decision and why? Why is this integration structured this way? What does this function actually do?

Nobody knows. The agent wrote it. The founder approved it without fully understanding it. The documentation, if it exists at all, was also written by an agent and is optimized for sounding complete rather than actually being useful.

This is tech debt, and it is not hypothetical. I hear about it constantly. Founders who built fast and are now stuck. Companies that scaled their product before they understood it. Teams spending more time on maintenance and confusion than on new development.

Now imagine that at scale. Imagine entire companies, or entire industries, where the codebase is a black box because the agents that built it are not around to explain their decisions, and the humans who were supposed to be in the loop got optimized out of the process because someone told them that was the bottleneck.

I am not being dramatic. This is a real risk. And it is closer than a lot of people want to admit.

Why Human-in-the-Loop Development Is Intentional, Not Inefficient

I want to be honest about something. My workflow, me building the frontend, writing the tickets, handing off to my engineers, is not the most efficient process in the world if you measure efficiency purely by speed of output.

An agent could probably do some of what I do faster. Maybe a lot faster.

But here is what the agent cannot do. It cannot sit with a feature and ask whether we should build it at all. It cannot notice that the thing I designed looks good in isolation but does not fit the way users actually move through the product. It cannot flag that the ticket I wrote assumes a backend structure we moved away from three months ago, without anyone updating the docs.

My engineers can do all of those things. I can do some of them. And the conversation that happens between us, the questions, the occasional "hey, I think there is a better way to do this," that is not a bottleneck. That is product development.

There is a version of building a tech company where you automate everything you possibly can and move as fast as the tools will let you. I understand the appeal of that. I genuinely do. Time is the scarcest resource any founder has, and the pressure to ship is real.

But there is another version where you move fast and understand what you are building. Where the speed comes from having a clear process and good tools, not from removing human judgment from the equation. Where you can actually explain to a new engineer, an investor, a customer, exactly how your product works and why it works that way.

That second version is harder. It requires you to stay in the loop even when it is uncomfortable. It requires you to learn enough to ask good questions. It requires you to read the plan before you click approve.

I think the second version is the one that builds companies that last.

Advice for Non-Technical Founders Using AI Agents in 2026

If you are building with AI tools right now, and I hope you are, I want to say this directly to you.

The people selling you full automation are not wrong that it is possible. They are wrong that it is wise.

You got into this to build something. A real thing that serves real people and solves a real problem. That thing deserves your judgment. It deserves your understanding. It deserves a human being who cares about it enough to stay in the loop, ask the hard questions, and make decisions that are not just technically correct but strategically right.

AI tools are genuinely remarkable. I would not have been able to build Theanna's frontend without them. I would not have been able to ship as fast, iterate as quickly, or communicate as clearly with my engineering team. They are not the enemy of thoughtful building. They are, when used well, the enabler of it.

But "when used well" is doing a lot of work in that sentence.

- Stay involved. Do not outsource your understanding of your own product to an agent.

- Learn enough to evaluate what gets built. You do not need to write code, but you need to understand what the code is doing.

- Know the difference between your development environment and your production environment. Know the difference between a feature that works and a feature that scales.

- Do not let an agent make decisions that require judgment just because an agent can execute them faster.

A Message to the Tech Industry About How AI Tools Are Sold

This one is a little different, because I know some of you building the tools will read this.

I am not asking you to slow down. I am not asking you to build less capable agents. I think the trajectory of this technology is extraordinary and I want to see it continue.

What I am asking is for more honesty in how these tools are sold and positioned.

When you tell a founder that their human review process is a bottleneck, you are making a values claim disguised as an efficiency claim. You are saying: speed of deployment matters more than depth of understanding. Throughput matters more than judgment. Automation is better than involvement.

Those are not universal truths. They are choices. And for a lot of founders, especially early-stage ones who are still figuring out what they are building and why, they are the wrong choices.

The most dangerous version of the AI hype cycle is the one where we convince a generation of founders that understanding their own product is optional. That the right tool can replace the need to think carefully about what you are building. That judgment is a legacy concept from an era before agents could just handle it.

I do not believe that. I do not think most experienced builders believe it either. But the messaging in the market often points the other way, and founders, especially non-technical ones who already feel like they are playing catch-up, are susceptible to it.

You can build remarkable tools and still be honest about what they cannot do. In fact, I would argue that is the only sustainable way to build trust in this space long term.

My Humans Are Not the Bottleneck. They Are the Product.

I have thought about responding to that email. I probably will not, because there is no human on the other end to have a real conversation with. That is kind of the point.

But if I did respond, here is what I would say.

My humans are not the bottleneck. My humans are the reason my product is coherent. They are the reason I can explain, in plain language, what we built and why. They are the reason that, when something breaks, we know where to look. They are the reason our codebase is something I am proud of rather than something I am afraid to touch.

The agent that emailed me optimized for finding a lead. It found someone who uses AI tools and might want to automate more. What it could not read, what it fundamentally cannot read, is intent. Context. Strategy. The difference between a process that looks inefficient and a process that is working exactly as designed.

That gap between what can be automated and what requires judgment is not closing as fast as the pitch decks suggest. And until it does, I will keep my humans exactly where they are.

In the loop. In the decisions. In the work.

That is not a bottleneck. That is how you build something that lasts.

Key Takeaways: AI Agents vs Human Judgment in Software Development

- AI agents optimize for objectives, not judgment. An agent can execute a task flawlessly and still make the wrong strategic decision because it cannot read context the way a human can.

- Human-in-the-loop development is intentional, not inefficient. Engineers who review code, question architecture, and push back on bad ideas are doing product development, not creating bottlenecks.

- Speed without understanding creates tech debt. Founders who ship AI-generated code without understanding it end up with codebases that no one, including future engineers, can maintain or explain.

- The real bottleneck is unthoughtful automation. Products are scaling the wrong thing faster than ever because the feedback loop between "does this serve the user" and "ship it" has collapsed.

- AI tools are remarkable when used with intention. Claude Code, Lovable, and other AI coding tools are not the enemy of thoughtful building. They are enablers of it, when founders stay involved.

- Non-technical founders should learn to evaluate, not just approve. You do not need to write code. But you do need to understand what the code is doing and why.

- Full automation is possible. That does not make it wise. The people selling you on removing humans from the loop are making a values claim disguised as an efficiency claim.

Want to build with AI tools the right way?

Theanna helps non-technical female founders build, launch, and grow tech companies with human judgment at the center.

Hi! I'm Nomiki, founder of Theanna. I'm building a platform for non-technical female founders who want to build, launch, and grow tech companies. I document my journey to $1M ARR here on this blog. The real numbers, the real process, the real lessons. If you want to follow along, subscribe to Building Theanna.